Any lover of mathematics and programming is sure to have come across the term "gradient descent" before. In short, it is an algorithm for calculating the parameters of a simple machine learning model, linear regression. In this post, I'll explain the underlying mathematics of the algorithm and show my quick implementation in Python.

We want to use the idea of least squared residuals. A residual is just the difference between the actual value $y$ and the value predicted by the model, $h(x_i)$, hence the $h(x_i)-y_i$ in the expression. However, we need to square them so that negative (underestimates) and positive (overestimates) values are treated in the same way. By squaring instead of taking the absolute value (which is still possible, a technique known as L1 regression), 1) the maths becomes easier by taking the derivative of a polynomial instead of an absolute value and more importantly, 2) we "punish" errors more severely for values that are very inaccurate, so that it is better to miss by a little many times than to miss by a lot few times. $$ J(\theta_0, \theta_1)=\frac{1}{2m} \sum_{i=1}^m{(h(x_i)-y_i)^2} $$ Note that we have half the mean, $\frac{1}{2m}$, just to make our calculation of the partial derivative easier (as you will see, the 1/2 factor will be cancelled). Now, we will calculate the partial derivative of our cost function with respect to the two parameters $\theta_0$ and $\theta_1$. Don't worry, it's not hard at all! $$ \begin{aligned} \\ J'_{\theta_0} &= \frac{\partial}{\partial\theta_0}\frac{1}{2m} \sum_{i=1} ^ m{(h(x_i)-y_i)^2} \\ &=\frac{1}{2m} \sum_{i=1} ^ m \frac{\partial}{\partial\theta_0}((\theta_1x_i+\theta_0)-y_i)^2 \\ &= \frac{1}{2m} \sum_{i=1} ^ m 2(\theta_1x_i+\theta_0)-y_i)\cdot1 \\ &= \frac{1}{m} \sum_{i=1} ^ m (h_{\theta}(x_i)-y_i) \end{aligned} $$ $$ \begin{aligned} \\ J'_{\theta_1} &= \frac{\partial}{\partial\theta_1}\frac{1}{2m} \sum_{i=1} ^ m{(h(x_i)-y_i)^2} \\ &=\frac{1}{2m} \sum_{i=1} ^ m \frac{\partial}{\partial\theta_1}((\theta_1x_i+\theta_0)-y_i)^2 \\ &= \frac{1}{2m} \sum_{i=1} ^ m 2(\theta_1x_i+\theta_0)-y_i)\cdot x_i \\ &= \frac{1}{m} \sum_{i=1} ^ m ((h_{\theta}(x_i)-y_i)x_i) \end{aligned} $$ As you can see, the only difference between the two derivatives is when we differentiate our hypothesis, $h(x_i)=\theta_1 x_i+ \theta_0$. With respect to $\theta_0$, $\theta_1 x_i$ is just a constant so the derivative is the derivative of $\theta_0$, or $1$. With respect to $\theta_1$, $\theta_0$ is a constant, so the derivative is just the derivative of $\theta_1x_i$, or $x_i$.

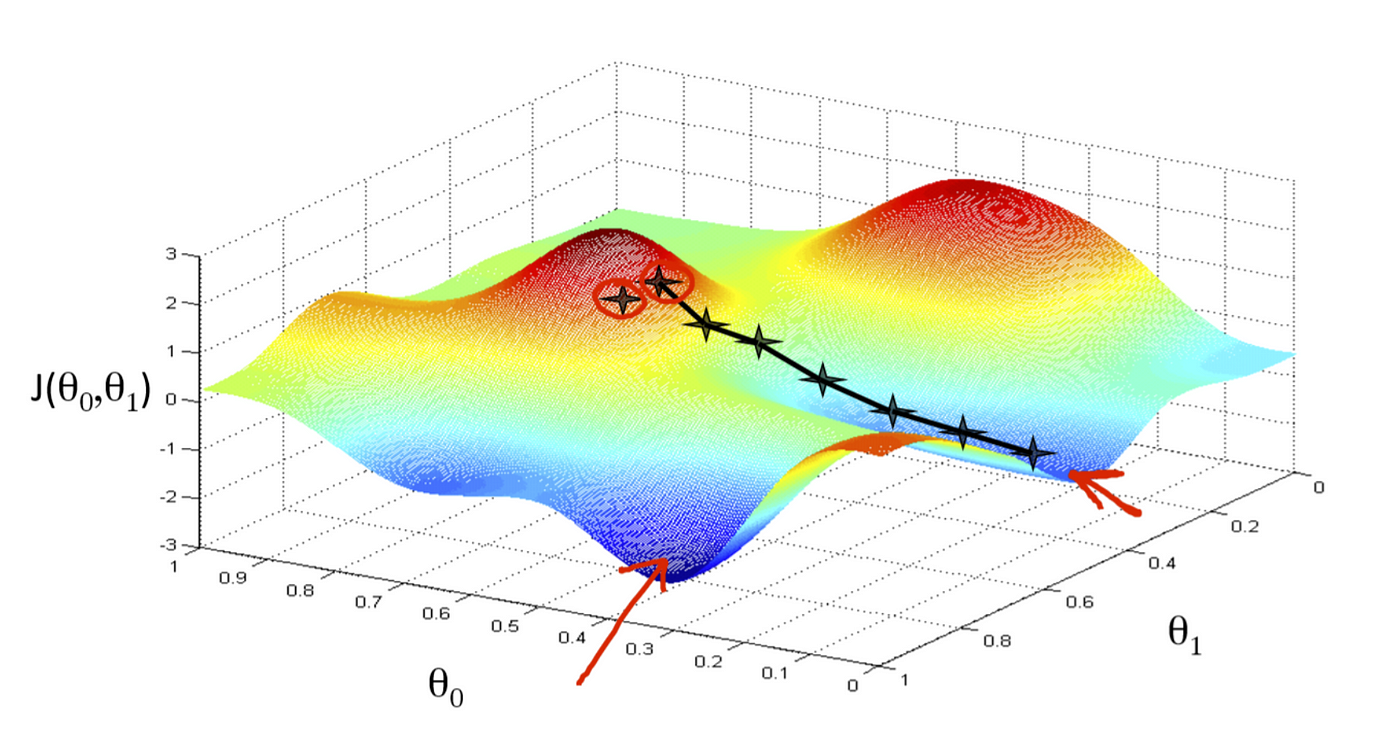

Now that we have competed the partial derivatives of the least squares residuals function $J(\theta_0, \theta_1)$ we want to write an algorithm that will iteratively approach the least value of this function. Essentially, on every iteration, we want to update the value of $\theta_j$ with the current value subtracted by the derivative (to descend to the next "step" in the function) multiplied by the learning rate, $\alpha$, that determines how much we should move by on each step.

$$\\ \theta_j := \theta_j - \alpha\frac{\partial}{\partial\theta_j} J(\theta_0,\theta_1)$$Here, $:=$ indicates the assignment operator, i.e. updating the value of $\theta_j$ with the new calculated value.

This is a very rough code that I wrote to implement the above algorithm without using any machine learning libraries such as sklearn. As you can see in the sample outputs below, it works quite well.

import matplotlib.pyplot as plt

import numpy as np

from math import isnan

def run(x, y):

t0, t1 = 0, 1 #initial values for parameters

m = len(x) #size of training set

alpha = 0.01 #alpha, learning rate

dp = 10 #desired level of decimal places to be deemed convergent

for i in range(10000):

h = lambda t0, t1, x: t1*x+t0 #define hypothesis, h(x) = t0 + t1 * x

dev0 = sum([(h(t0, t1, x[i])-y[i]) for i in range(m)]) #partial derivative

dev1 = sum([((h(t0, t1, x[i])-y[i])*x[i]) for i in range(m)]) #partial derivative

#simultaneous updating once derivatives have been computed

old_t0, old_t1 = t0, t1

t0 -= alpha*1/m*dev0

t1 -= alpha*1/m*dev1

print(t0, t1, old_t0, old_t1)

if isnan(t0) or isnan(t1): #diverge to +inf or -inf

print(f"Diverged to infinity, decrease alpha ({alpha})")

break

if ("{0}".format(round(old_t0, ndigits=dp)) == "{0}".format(round(t0, ndigits=dp))

and "{0}".format(round(old_t1, ndigits=dp)) == "{0}".format(round(t1, ndigits=dp))): #check precision

print(f"Converged after {i} iterations")

break

else: #loop was not broken after 10000 iterations, diverge up and down

print(f"Could not converge in 10000 iterations, decrease alpha ({alpha})")

return t0, t1

if __name__ == "__main__":

x = [i for i in range(9)]

y = [13, 11.2, 11.4, 8.3, 6.4, 1.2, 2.3, 0.1, -3]

# y = [2, 3.2, 3.6, 4.9, 5.2, 7.2, 9.0, 10, 13]

t0, t1 = run(x, y)

_x = np.linspace(min(x), max(x), num=100)

_y = t1*_x+t0

plt.plot(_x, _y, '-r', label=f'y={t1}x+{t0}')

plt.title(f'Regression: y={t1}x+{t0}')

plt.scatter(x, y)

plt.grid()

plt.show()

One thing to note is that because the gradient descent algorithm will automatically take smaller steps when it approaches the local minimum, there is no need to decrease $\alpha$ over time, and we can keep it fixed as $0.01$.

This dataset converged to 10 decimal places of accuracy in 6953 iterations:

Here is another sample run:

In the case where we have multiple features (such as the number of floors, number of garages, number of square metres, etc to predict the selling price of a house), we can redefine the features and values to be vectors.

$\theta=\begin{bmatrix}\theta_1 \\ \theta_2 \\ \vdots \\ \theta_n \end{bmatrix}$ and $x=\begin{bmatrix}x_1 \\ x_2 \\ \vdots \\ x_n \end{bmatrix}$ where $\theta_i$ are the features such as price per floor, price per square metre, price per garage, etc and $x_i$ are the values such as the number of floors, number of square metres, number of garages, etc.

Then, the new hypothesis function is given by $$\begin{aligned} h_\theta(x) &=\theta_0 x_0+\theta_1 x_1+....+\theta_n x_n \\ &= \begin{bmatrix}\theta_1 \\ \theta_2 \\ \vdots \\ \theta_n \end{bmatrix}\begin{bmatrix}\theta_1 \ \theta_2 \ \dots \ \theta_n \end{bmatrix} \\&=\theta^Tx \end{aligned}$$, where $\theta^T$ is the transpose of the column vector (and thus yields a row vector).

For convenience's sake, we will simplify the notation so that $J(\theta)=J(\theta_0, \theta_1, \theta_2...\theta_n)$. Then, it is obvious that the computation of the partial derivative has exactly the same form as in the case with a single parameter $\theta_1$, and so the algorithm for multivariate linear regression is given by $$\\ \theta_j := \theta_j - \alpha\frac{\partial}{\partial\theta_j} J(\theta)$$ for $j=0, 1, \dots n$.

There are various ways to clean the data so that the gradient descent algorithm can converge quickly. While I won't go into the details here, below are some of them:

There are still so many things we can do with gradient descent that it's impossible to cover it all in a single post. I could, for instance, do polynomial regression (instead of linear) by creating new features based on the polynomial (e.g. $x_i^2, x_i^3, etc$ and reducing it to a problem of multivariate linear regression. I could implement the code for this, and I could also include some contour plots to show the minimising cost function.

If you want to learn more of this cool machine learning stuff, go check out the Machine Learning course on Coursera by Andrew Ng, the cofounder of Coursera and a leading pioneer of machine learning.